center

for extended magnetohydrodynamic modeling

A Proposal referencing Program Announcement LAB01-10

Scientific Discovery through Advanced

Computing:

Advanced Computational Research in Fusion Science

Office of Fusion Energy Science

U. S. Department of Energy

15 March 2001

Principal

Investigator: Laboratory

Official:

Stephen C. Jardin Rich

Hawryluk

Principal Research Physicist Deputy

Director

Princeton Plasma Physics

Laboratory Princeton

Plasma Physics Laboratory

609 243-2635 (voice) -2662 (fax) 609

243-3306 (voice) –2749 (fax)

email: jardin@pppl.gov email:

rhawryluk@pppl.gov

- - - - - - - -

- - - - - - - - - - - - -

- - - - - - - - - - - - - - - - - - -

Requested Funding : FY 2001: $1,000,000

FY 2002: $1,147,000

FY 2003: $1,222,000

Co-PIs and Senior Personnel:

PPPL: Guoyong Fu, Wonchull

Park SAIC: Scott

Kruger, Dalton Schnack

MIT: Linda E.

Sugiyama LANL: Rick

Nebel

NYU: Hank

Strauss U. Colorado: Scott

Parker

General

Atomics: Dave Schissel Utah State U.: Eric Held

U.

Texas (IFS):

Frank Waelbroeck

U.

Wisc: Jim Callen, Chris Hegna, Carl Sovinec

Note: Institutional contacts underlined.

No human subjects or vertebrate animals are to be used in this project.

Table of Contents

Abstract 3

Narrative

Background and

Significance 4

Preliminary Studies 7

Research Design and

Methods 15

Subcontract or

Consortium Arrangements 28

Literature Cited 29

Budget and Budget Explanation 31

Other Support of Investigators 33

Biographical Sketches 34

Description of Facilities and Resources 38

Appendix A: Plasma Fluid Models 39

Appendix B: Collaboration Letters 41

Appendix C: The Software

Framework

Appendix D: Institutional Budget

Pages

Appendix E: Deployment Tables

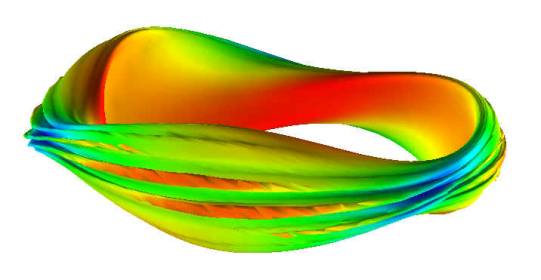

Figure 1:

3D MHD simulation shows that a toroidally localized ballooning mode develops in

the LI383 stellarator design when the design beta limit is exceeded. The equi-pressure surface reveals the mode

steepening nonlinearly to form ribbon-like structures. The color represents a particular flux

variable. [M3D calculation by Strauss and Park]

- Abstract -

The proposed Center for Extended Magnetohydrodynamic Modeling will enable a realistic assessment of the mechanisms leading to disruptive and other stability limits in the present and next generation of fusion devices. The main work involves extending and improving the realism of the leading 3D nonlinear magneto-fluid based models of hot, magnetized fusion plasmas, increasing their efficiency, and using this improved capability to pioneer new tera-scale simulations of unprecedented realism and resolution. This will provide new insights into some of the most critical and complex phenomena in plasma and fusion science. The proposed work builds on the established research teams that have developed the NIMROD and M3D codes. Activities are proposed in the following areas: Code Development -- improving the computational performance, implementing extended MHD models, and validation of the numerical algorithms; Model Development -- refining numerically tractable physical models for extended MHD (XMHD) and testing their efficacy; Visualization and Data Management -- providing visualization and data handling capabilities; Computer Science Enabling Technologies (CSET) -- which consists of several supporting computer science projects; Code Support, consisting of disseminating the code capability to the community, and supporting the developing user base; Applications and Validation – applying and validating the underlying models through cross-code and experimental comparisons.

1. Background and Significance

1.1 Overview

The proposed Center for Extended Magnetohydrodynamic Modeling (CEMM) will develop and deploy predictive computational models for the study of low frequency, long wavelength fluid-like dynamics in the diverse geometries of modern magnetic fusion devices. These models will be used to extend our fundamental scientific knowledge of the nonlinear dynamics of hot, magnetized plasmas. This will further the programmatic aims of the magnetic fusion energy program by providing fundamental insight into the processes that cause plasmas to undergo spontaneous transitions from states that are stable and confined to ones that are unstable and disruptive, or that exhibit poor plasma confinement.

The proposed Center consists of three central elements: existing and evolving large-scale simulation codes, theoretical model development, and state-of-the-art computer science support for algorithmic solvers, visualization, and data management. The first CEMM element brings together the two established computational research teams that have developed the NIMROD and M3D codes, and whose members have considerable experience in the development and application of plasma fluid models. Each of these major codes has demonstrated scalable performance on present massively parallel architectures, and both codes are ready to take advantage of new supercomputer technology as it emerges. The theoretical CEMM element will seek to elucidate the extensions to the resistive MHD model that are required to describe the dynamics of modern fusion plasmas. We call these extended models “extended MHD” or “XMHD”. Unlike independent theory, this effort will focus on casting the resulting equations in a computationally efficient form for implementation. The supporting computer science CEMM element will emphasize the development and implementation of mesh generators, discretization packages, linear algebra algorithms, specialized visualization tools, and data base structures that improve the code efficiency and enhance the manipulation and analysis of the resulting data. Rather than being located at a particular institution, the proposed CEMM will be an inter-disciplinary, multi-institutional team. This facilitates obtaining the required technical expertise from the appropriate places both within and outside the existing fusion program. The majority of our team has collaborated successfully under the Office of Fusion Energy Science PSACI pilot project; we have experience in such a distributed technical effort.

The Center has made a conscious decision to retain two code lines, M3D and NIMROD. The details of these codes are described in Section 2.3. These codes overlap considerably in their capabilities; the fact that they have been developed separately and use very different numerical representations allows them to serve as essential independent checks on one another. They also have their own unique strengths. The NIMROD code utilizes a Fourier representation in the toroidal angle and emphasizes the development of strongly implicit, highly parallel computational schemes. The M3D code uses a 3D mesh and a real space representation. It is better suited to problems with inherently three dimensional boundaries such as stellarators. Recent developments enable these codes to share data storage and visualization systems and software. These developments, achieved through the PSACI pilot project, will be extended in the CEMM to include the sharing of physical models and numerical methods.

Details of the proposed Center for Extended MHD Modeling are given in the body of this proposal. The remainder of Section 1 provides the programmatic motivation for the establishment of the CEMM, and it describes the compelling scientific challenge to be addressed by the Center. Section 2 contains: detailed background information, including discussions of the difficulty of the physics problem at hand, the formidable computational obstacles that must be overcome, and the present status and capabilities for the simulation of extended MHD. The specific technical approach of the proposed effort is given in Section 3. A brief description of several targeted application problems is given in Section 4. A detailed management plan is presented in Section 5.

1.2 Programmatic Motivation

The magnetic fusion program is presently embarked on two major development paths. One is to explore the physics of burning (fusing) plasmas. The other is to investigate the basic plasma confinement properties of a wide variety of innovative fusion reactor concepts. A large component of the estimated costs of both development paths is caused by the uncertainty in the dynamical behavior of the plasma. Under some operating conditions, an experiment can spontaneously transform from a stable system exhibiting good confinement into one that exhibits poor confinement or becomes unstable and disruptive. The responsible physical phenomena are: usually long wavelength and nonlinear, characterized by extreme anisotropy in their spatial structure, and can evolve on time-scales that are much longer than the Alfvén wave transit time. Very little of predictive value is known about them, particularly their nonlinear behavior. Because of budget realities, few experiments can be built to investigate even the key physical phenomena. Therefore, the critical physics issues and designs for each development path must be precisely identified before funds are committed to construction and operation. This requires increasing our knowledge and predictive ability of the critical nonlinear physics phenomena.

Numerical simulation can provide a key component of this effort, since computations are performed at a tiny fraction of the cost of an experiment. However, the computations must solve a physical model that accurately reproduces and predicts laboratory results. To be effective, the simulations must be combined with a well-focused, well-diagnosed experimental physics program to guide model development, and to assure the validity of numerical results. Recent developments in plasma theory, computational physics, and computer science, along with anticipated advances in computer hardware performance, combine to make this simulation capability a very attractive option in the near future. Developing and deploying this capability will be the focus of the CEMM.

1.3 The Scientific Challenge

The low frequency, long wavelength dynamics of hot, magnetized plasmas are not well understood for two reasons:

- Theoretical: The only practical means of describing low frequency global motions in real geometry is with a predominantly fluid model. The traditional magneto-hydrodynamic model (MHD) provides a good description of ideal and almost-ideal instabilities. A key challenge for present-day fusion research, however, is to predict and control instabilities that have much slower growth (see applications Sec. 3.5) but that dominate most experimental discharges. For slow modes, effects that are omitted in the MHD model due to finite Larmor radius, pressure anisotropy, and some long mean-free-path effects, become important. The theoretical challenge is to extend the standard MHD model to include the relevant effects while preserving computability.

· Computational: The physics problems that are important to fusion science are among the most daunting problems in computational physics. Macroscopic evolution of the confining magnetic fields can occur over time-scales that are orders of magnitude longer than those of the normal modes of the MHD model. Extended MHD introduces two-fluid modes and particle streaming at time-scales that are yet orders of magnitude faster than that of the MHD modes. Furthermore, both spatial structure and dynamics are fundamentally different perpendicular and parallel to the magnetic field. These properties of extreme separation of time and spatial scales, and extreme anisotropy combine to present a difficult computational challenge—one that is at least as difficult and scientifically compelling as the computation of fluid turbulence.

1.4 Proposed Center for Extended Magnetohydrodynamic Modeling (CEMM)

The programmatic need for a predictive simulation capability, and the theoretical and computational challenges involved in its development and deployment, combine to make a compelling case for bringing our best computational, theoretical, and computer science capabilities to bear on this problem. To this end, we propose the establishment of the Center for Extended MHD Modeling (CEMM). The Center will be interdisciplinary and multi-institutional, and will draw on expertise from both within and outside the present fusion science program. The Center will have six important components that will collectively enable the new Terascale applications:

· Advanced, parallel, scalable code development building on the strengths of the NIMROD and M3D codes;

· A synergistic theoretical and computational model development effort to distill the essential physics from the kinetic plasma description and to elucidate computationally efficient extensions of the appropriate fluid equations.

· The development of advanced visualization techniques and data base management for the efficient display and analysis of the four dimensional data produced by the computational models and to facilitate comparisons between NIMROD and M3D simulation results and experimental data.

· A computer science enabling technologies (CSET) program to implement specialized numerical algorithms and simulation components to improve the efficiency of the computational models, and to help manage code complexity and reduce development time.

· A code support effort to effectively disseminate these computational models to the fusion and general science communities.

· An aggressive applications and validation effort to compare the results of the two codes NIMROD and M3D: with one another, with analytic limiting cases, and with experimental data—the ultimate test;

Present budgets no longer allow the luxury of assembling such a group at a single institution. Instead, CEMM will be assembled from the staffs of many diverse institutions that participate in the fusion and computer science programs. (Note that the “pure” theory and experimental efforts will be funded out of the mainline OFES theory and experimental programs, and not from SCIDAC. We include them here because they are an integral part of the program. Similarly the majority of the CSET effort is funded separately as discussed in Sec. 3.4)

2. Preliminary Studies

2.1 Experimental Observations in Hot Fusion Plasmas

Modern

fusion plasma experiments are rich in low frequency, long-wavelength electromagnetic,

i.e. MHD-like, activity. An example is

discharge number #86144 from the DIII-D experiment at General Atomics

Corporation in San Diego. This hot

plasma has a Lundquist number S (the ratio of resistive diffusion time to

Alfvén wave transit time) of about 108 and a magnetic Prandtl number Pr (the

ratio of the resistive diffusion time to the perpendicular viscous diffusion time)

of about 100. This discharge illustrates

much of the important nonlinear physics alluded to in Section 1.2 that will be

addressed by this proposal.

Modern

fusion plasma experiments are rich in low frequency, long-wavelength electromagnetic,

i.e. MHD-like, activity. An example is

discharge number #86144 from the DIII-D experiment at General Atomics

Corporation in San Diego. This hot

plasma has a Lundquist number S (the ratio of resistive diffusion time to

Alfvén wave transit time) of about 108 and a magnetic Prandtl number Pr (the

ratio of the resistive diffusion time to the perpendicular viscous diffusion time)

of about 100. This discharge illustrates

much of the important nonlinear physics alluded to in Section 1.2 that will be

addressed by this proposal.

Time traces of several experimental

diagnostics are shown in Figure 2. The top trace shows power injected from a

beam of energetic particles. The second

trace shows a normalized measure (b) of

the internal energy. The third and

fourth traces show two different toroidal harmonics (n = 2 and

n = 1) of the helical perturbations in the magnetic field. The bottom trace is a spectroscopic

diagnostic that responds to activity at the edge of the plasma.

Time traces of several experimental

diagnostics are shown in Figure 2. The top trace shows power injected from a

beam of energetic particles. The second

trace shows a normalized measure (b) of

the internal energy. The third and

fourth traces show two different toroidal harmonics (n = 2 and

n = 1) of the helical perturbations in the magnetic field. The bottom trace is a spectroscopic

diagnostic that responds to activity at the edge of the plasma.

For the first 2250 milliseconds (ms) of the discharge, the plasma internal energy increases in response to the energy injected by the neutral beam. The plasma also exhibits quasi-periodic oscillations in the n = 1 magnetic signal (called sawtooth oscillations). During this phase, the discharge is a steady state system with good plasma confinement. After 2250 ms things change dramatically. An n = 2 magnetic perturbation appears and grows to large amplitude, while the n = 1 oscillations continue unabated. The internal plasma energy now fails to increase in response to increases in the injected neutral beam power, and the edge D-alpha fluctuations occur much more frequently. The growth of an n=2 magnetic perturbation has been identified as a neoclassical tearing mode instability. At 3450 ms the disappearance of the magnetic signals indicates that the large, primarily n = 1, perturbations have "locked" to the wall of the device. A sudden and premature termination of the discharge quickly follows at 3900 ms. The confinement properties of the plasma have obviously been drastically altered by the events that began at 2250 ms. The phenomenology of these events and their evolution are not well understood.

Nonlinear simulations with the NIMROD code using conventional resistive MHD did not reproduce the salient features of the discharge [Gianakon99], even with dimensionless parameters approaching those of the experiment. Subsequent analysis determined that extended MHD physics underlies much of the dynamics. Successful comprehensive simulation of experiments such as DIII-D #86144 requires simultaneous modeling of

· realistic geometry to accurately capture the global nature of important macroscopic modes,

· high S and low viscosity conditions to ensure adequate separation of temporal and spatial scales,

· the ability to perform long time-scale simulations to follow the nonlinear evolution of the equilibrium configuration,

· realistic edge and boundary conditions, including a vacuum region, a moving plasma boundary, and a resistive wall to follow edge fluctuations and diffusion of magnetic perturbations into the wall,

· anisotropic heat flux and finite gyro-radius and banana-width effects to capture the nonlinear neoclassical island-size threshold,

· two-fluid effects for drifts and electron-physics modifications to global modes,

· kinetic extensions to reproduce free-streaming effects, and

· energetic particle effects to capture the growth rate modification of the sawtooth oscillation.

Considerable progress has already been made by the NIMROD and M3D teams in several of these areas. The objective of the proposed Center for Extended MHD Modeling (CEMM) is to bring all of these components together, to obtain a fundamental understanding of the nonlinear physics that limits performance in the most advanced fusion experiments.

2.2 Physics Background

Here we discuss the present status of the plasma simulation models referenced in this proposal, and give an overview of the physical effects to be addressed.

2.2.1 Plasma Models

Plasma dynamics are completely described by the evolution of

the distribution function ![]() , for each particle species a as given by an

appropriate plasma kinetic equation of the form

, for each particle species a as given by an

appropriate plasma kinetic equation of the form ![]() , where

, where ![]() is a complicated collision

operator describing collisions between species a

and b. This kinetic equation must be solved consistently

with the evolution of the electric and magnetic fields, using Maxwell’s equations. For applications to real plasmas, this evolution

must be obtained in a realistic experimental geometry. In general, such a full kinetic

electromagnetic description is both analytically impossible and

computationally impractical.

is a complicated collision

operator describing collisions between species a

and b. This kinetic equation must be solved consistently

with the evolution of the electric and magnetic fields, using Maxwell’s equations. For applications to real plasmas, this evolution

must be obtained in a realistic experimental geometry. In general, such a full kinetic

electromagnetic description is both analytically impossible and

computationally impractical.

There are two general categories of three-dimensional plasma simulations in current use: resistive MHD and electrostatic turbulence. The first category, resistive MHD (or often just called MHD), uses the model given in Appendix A as Eq. (A1a-f) with a single scalar pressure (i.e., P = 0). This is a common and useful approximation that is only strictly valid for plasma motions at the Alfvén velocity and in the high collisionality and small gyroradius limit. These assumptions are not satisfied in fusion plasmas; thus we can expect the MHD model to give at best only qualitative results. The second category of simulations, electrostatic turbulence, treats the ions using a gyrokinetic (or gyro-Landau-fluid) description; but it assumes that the magnetic field is static and that the electrons respond adiabatically. This model is useful for studying short spatial scale turbulence that is responsible for most anomalous transport in fusion devices; but is not useful for studying global “MHD” phenomena that require a full treatment of the magnetic field. [Note: Activity is underway in the electrostatic turbulence category to partially remove some of these restrictions. However, current work focuses on modeling fine scale dominantly electrostatic turbulent transport].

The thrust of the present proposal is to extend the resistive MHD category by addressing a more complex set of equations. The resultant extended MHD models will generically be denoted “XMHD”. These extended models lead to simulations that are improved in two ways. The first improvement is quantitative: the simulated plasma motion and growth rates for MHD dominant modes should be in better agreement with the experimental values. A more fundamental improvement, however is qualitative: XMHD simulations will be able to model entirely new classes of plasma phenomena.

The XMHD models discussed in this proposal fall into two general categories that will be discussed further in the next two sections. They are:

Two-fluid XMHD models with fluid-like equations for the electrons and the ions where the difference in their relative velocity and the anisotropy of the pressure tensor are accounted for, and

Hybrid particle/fluid XMHD models with numerically obtained closure relations where some or all of the ion response is obtained from particle solutions of the gyrokinetic equations and the electron response is obtained from fluid-like numerical solutions of the reduced phase space drift-kinetic equation.

Generally, the two-fluid XMHD models are less computationally demanding, but the hybrid XMHD models contain additional physical effects such as nonlinear wave-particle resonance that may be essential in certain classes of macroscopic phenomena. Note that the two models can have overlapping regions of validity; in particular they do if the nonlinear wave-particle resonance is not essential for the phenomena being modeled.

2.2.2 Two-fluid XMHD models

The general form of the two-fluid XMHD model is given in Appendix A as Eqs. (A-1a)-(A-1f), supplemented by Eqs. (A-2) and (A-3). The theoretical challenge is to define the ion and electron stress tensors Pi and Pe and the heat fluxes qi and qe. These terms contain a hierarchy of nonlocal effects, including finite (ion) Larmor radius (FLR) affects (which lead to gyroviscosity) and the effect of long collision lengths parallel to the magnetic field, including magnetic particle trapping (important in neoclassical effects). In the methods we have implemented to date [Sugiyama00, Gianakon96], the neoclassical parallel viscosity terms involving Pi and Pe are approximated using analytic neoclassical closures due to [Hirshman81] and [Callen86], and the ion gyroviscous contribution to Ñ· Pi uses approximations due to [Hazeltine92]. This latter expression is approximate for use in a nonlinear torus, since it assumes a uniform straight magnetic field and a small perturbation from an axisymmetric equilibrium.

2.2.3 Hybrid particle/fluid XMHD models

To model the nonlinear interaction of ions with MHD waves and to include large gyro-orbit and neoclassical effects more self-consistently, we have developed several hybrid methods, where either an energetic ion component or the whole ion population are modeled using the gyrokinetic or drift kinetic equations. In the methods implemented so far [Belova98], the ion fluid velocity is calculated by solving the momentum equation, and the calculated ion fluid velocity is used in the Ohm’s law, assuming quasineutrality. The ion pressure tensor is taken to be in the CGL (Chew, Goldberger, Lowe) form and the gyroviscous part of the stress tensor is calculated from the particles. Hot particle populations, for example fusion-produced a-particles or injected neutral beam ions, can be simulated by combining a gyrokinetic hot particle population with a fluid model for the background. [Park92]

The electron response is traditionally simulated using a fluid response. The proposed research will remove this restriction by further developing a Chapman-Enskog —like procedure for numerically solving the electron drift-kinetic equation [Held01]. This will allow a combination of neoclassical effects and parallel heat conduction effects to be properly modeled in low collisionality, long collision length hot fusion plasmas.

2.2.4 Physical Effects Introduced by Extended MHD

XMHD contains many effects that are absent from standard MHD and important in fusion plasmas.

Drift Waves: Two-fluid effects introduce electron and ion diamagnetic flows and hence drift wave effects into the MHD model. These can have important stabilizing or destabilizing consequences for plasma instabilities. For example, drift wave modifications are important in stabilizing high mode number resistive-MHD-type instabilities in hot fusion plasmas [Diamond86]. Such drift waves propagate radially away from their mode-rational surfaces, but are damped by kinetic processes such as ion Landau damping that limit the extent of their effects. An appropriate ion closure relation [Hendrick92] should be included in the model. It has also been hypothesized that nonlinear effects due to the ion diamagnetic drift can explain the observed stabilization of the internal, nearly-ideal MHD modes that cause sawteeth [Zakharov92].

Plasma Rotation. Extended MHD effects can cause changes in plasma equilibria and their response to rotation. The ions and electrons respond differently to rotational forces, due to their different masses; this generates a radial electric field that may persist in steady state [Bowers71]. In an axisymmetric system, neoclassical dissipation (the ion parallel viscous stress tensor) damps the plasma poloidal momentum. The dynamic effects become important when rotational forces exist. In addition, instabilities experience these effects. Growing magnetic islands rotate poloidally, leading to different self-consistent stabilizing or destabilizing effects.

Viscous Stress Tensor Effects: The higher order moments can drive new

instabilities. For example, the

appearance of ![]() in Ohm’s law,

Equation (A-1f), indicates that there is an electric field driven by the

divergence of the stress tensor. This

electric field drives a current parallel to the magnetic field, called the

bootstrap current, that is proportional to the pressure gradient perpendicular

to the magnetic field. This bootstrap

current exists independent from the applied electric field. These “neoclassical” effects can lead to

important macroscopic behavior known as neoclassical tearing modes

[Chang95] that are thought to set the pressure limits in many long-duration

tokamaks discharges and are not described by the resistive MHD model.

in Ohm’s law,

Equation (A-1f), indicates that there is an electric field driven by the

divergence of the stress tensor. This

electric field drives a current parallel to the magnetic field, called the

bootstrap current, that is proportional to the pressure gradient perpendicular

to the magnetic field. This bootstrap

current exists independent from the applied electric field. These “neoclassical” effects can lead to

important macroscopic behavior known as neoclassical tearing modes

[Chang95] that are thought to set the pressure limits in many long-duration

tokamaks discharges and are not described by the resistive MHD model.

Energetic Ion Component: In high temperature plasmas with a strong energetic ion component (such as in TFTR or JET), internal MHD modes, i.e., sawteeth, have been observed to be stable for extended periods. The net effect of hot particles may be stabilizing or destabilizing, depending upon their relative contributions to the total plasma beta (destabilizing) or the stabilizing kinetic term. There are other important kinetic effects involving the interaction of non-Maxwellian hot-ion particle populations with MHD modes. Among the most studied are the fishbone modes and the fishbone-like modes [Coppi00]. Fast ions can also destabilize toroidal Alfvén eigenmodes (TAEs), a stable normal mode of the ideal MHD spectrum in a torus [Cheng84].

These and other new physical effects contained in the XMHD equations can profoundly affect the dynamics of modern fusion plasmas. Specific applications to be addressed by CEMM are discussed in Section 3.5.

2.3 Computational Background

The CEMM is bringing to this project the two major 3D scalable fluid codes in the U.S. fusion program, NIMROD and M3D. These codes provide the framework within which the extensions in model capability and algorithmic efficiency will occur. This section describes the present status of these two codes and makes some observations on computational requirements for the future.

2.3.1

The NIMROD Code

The NIMROD code [Glasser99] solves the primitive form of the plasma fluid-model equations (A1-3) in axisymmetric toroidal, cylindrical, or periodic-linear geometry with arbitrary poloidal cross-sectional shape. (The geometry must have an ignorable periodic coordinate, but the simulated dynamics are fully three-dimensional.) The user selects which terms are retained in Ohm’s law, Equation (A-2), through an input parameter. The semi-implicit numerical method [Schnack87] is used to advance the solution from initial conditions. This avoids severe time step restrictions associated with wave-like normal modes of the system, sound, Alfvén, and whistler waves—while avoiding numerical dissipation. For accuracy at time steps that are orders of magnitude larger than explicit stability limits, the semi-implicit operator for mass motions is based on the linearized ideal MHD energy integral [Lerbinger91]. Matrix inversion is accomplished by parallel preconditioned Krylov methods, which is the most computationally demanding part of the time advance. Performance is therefore dependent on the effectiveness of the preconditioner [Plimpton99].

The spatial representation of NIMROD is an

important feature of the code. NIMROD

uses a combination of logically quadrilateral and triangular finite elements

for the poloidal plane and pseudospectral collocation for the periodic

direction [Orszag71]. The

The spatial representation of NIMROD is an

important feature of the code. NIMROD

uses a combination of logically quadrilateral and triangular finite elements

for the poloidal plane and pseudospectral collocation for the periodic

direction [Orszag71]. The  polynomials

used for finite element basis functions are selected by the user for optimal

efficiency, and poloidal mesh lines need not be orthogonal. For many fusion problems, accuracy is improved

by aligning grid lines with the equilibrium flux surfaces inside the separatrix. The grid can also be packed around low order

rational flux surfaces to efficiently resolve the small spatial scales that

arise at high S. Triangular meshing outside

the last closed flux surface allows complicated, realistic boundary shapes.

(Fig 3)

polynomials

used for finite element basis functions are selected by the user for optimal

efficiency, and poloidal mesh lines need not be orthogonal. For many fusion problems, accuracy is improved

by aligning grid lines with the equilibrium flux surfaces inside the separatrix. The grid can also be packed around low order

rational flux surfaces to efficiently resolve the small spatial scales that

arise at high S. Triangular meshing outside

the last closed flux surface allows complicated, realistic boundary shapes.

(Fig 3)

From

inception, NIMROD has been developed for implementation on distributed memory

architectures. Grid blocks allow decomposition

of the poloidal plane, and Fourier components are allocated to different layers

of processors, providing three-dimensional decomposition where each layer may

have a number of processors addressing different grid blocks. The Message Passing Interface library (MPI)

is used for communication that is portable across many platforms. With this approach, NIMROD has demonstrated

near ideal parallel scaling in production computations. An example is shown in Figure 4. Speed-up from the two types of decomposition

performed in tandem is essentially the product of the speed-up due to each

acting separately.

From

inception, NIMROD has been developed for implementation on distributed memory

architectures. Grid blocks allow decomposition

of the poloidal plane, and Fourier components are allocated to different layers

of processors, providing three-dimensional decomposition where each layer may

have a number of processors addressing different grid blocks. The Message Passing Interface library (MPI)

is used for communication that is portable across many platforms. With this approach, NIMROD has demonstrated

near ideal parallel scaling in production computations. An example is shown in Figure 4. Speed-up from the two types of decomposition

performed in tandem is essentially the product of the speed-up due to each

acting separately.

The NIMROD code has been extensively benchmarked

against calculations from several existing fusion codes, such as DEBS, GATO,

FAR, MARS and M3D. Validation problems

include linear ideal and resistive MHD instabilities in slab, cylindrical, and

toroidal geometry, resistive ballooning modes, nonlinear resistive tearing

modes, nonlinear kink-ballooning modes, and linear and nonlinear waves and instabilities

using two-fluid extensions. NIMROD is

presently being applied to study the dynamics of spheromaks [Sovinec01], RFPs

[Sovinec98], and tokamak experiments such as DIII-D at General Atomics in San

Diego.

The NIMROD code has been extensively benchmarked

against calculations from several existing fusion codes, such as DEBS, GATO,

FAR, MARS and M3D. Validation problems

include linear ideal and resistive MHD instabilities in slab, cylindrical, and

toroidal geometry, resistive ballooning modes, nonlinear resistive tearing

modes, nonlinear kink-ballooning modes, and linear and nonlinear waves and instabilities

using two-fluid extensions. NIMROD is

presently being applied to study the dynamics of spheromaks [Sovinec01], RFPs

[Sovinec98], and tokamak experiments such as DIII-D at General Atomics in San

Diego.

2.3.2 The M3D Code

Multilevel

3D (or M3D)[Park99] is a parallel code that is especially suited for geometries

with inherently three-dimensional boundaries, e.g. stellarators, but can also

be used to simulate axisymmetric devices. M3D consists of two parts, a mesh module and a physics module.

The mesh module contains the grid, implementation of differential and integral

operators, I/O, and inter-processor communication. The physics module handles time advancement of the equations and

contains a hierarchy of physics levels that can be invoked to resolve increasingly

complete phase-spaces, and therefore provide increasing realism. The module includes

resistive MHD, two-fluid, and kinetic particles. Electrons are represented as a fluid with an approximate fluid

closure. M3D uses a stream function/potential

representation for the magnetic vector potential and velocity that has been designed

to minimize spectral pollution. Parallel

thermal conduction is simulated with the "artificial sound" method

[Park86]. The solution algorithm is quasi-implicit in that only the most

time-step limiting terms including the compressional Alfvén wave and field diffusion

terms are implemented implicitly, with explicit time stepping used for the remaining

terms.

Multilevel

3D (or M3D)[Park99] is a parallel code that is especially suited for geometries

with inherently three-dimensional boundaries, e.g. stellarators, but can also

be used to simulate axisymmetric devices. M3D consists of two parts, a mesh module and a physics module.

The mesh module contains the grid, implementation of differential and integral

operators, I/O, and inter-processor communication. The physics module handles time advancement of the equations and

contains a hierarchy of physics levels that can be invoked to resolve increasingly

complete phase-spaces, and therefore provide increasing realism. The module includes

resistive MHD, two-fluid, and kinetic particles. Electrons are represented as a fluid with an approximate fluid

closure. M3D uses a stream function/potential

representation for the magnetic vector potential and velocity that has been designed

to minimize spectral pollution. Parallel

thermal conduction is simulated with the "artificial sound" method

[Park86]. The solution algorithm is quasi-implicit in that only the most

time-step limiting terms including the compressional Alfvén wave and field diffusion

terms are implemented implicitly, with explicit time stepping used for the remaining

terms.

M3D

has several mesh modules: the original structured module uses radial finite

differences and is spectral in poloidal and toroidal angles. Another mesh module, suitable for tokamaks,

has triangular and quadrilateral finite elements in the poloidal plane and is

spectral in the toroidal angle.

Finally, there is the three-dimensional mesh module, which has been

fully implemented with 3D MPI based domain decomposition for MPP platforms. This is the version to be further developed

in this proposal.

M3D

has several mesh modules: the original structured module uses radial finite

differences and is spectral in poloidal and toroidal angles. Another mesh module, suitable for tokamaks,

has triangular and quadrilateral finite elements in the poloidal plane and is

spectral in the toroidal angle.

Finally, there is the three-dimensional mesh module, which has been

fully implemented with 3D MPI based domain decomposition for MPP platforms. This is the version to be further developed

in this proposal.

A three-dimensional mesh is utilized to

facilitate the resolution of multi-scale spatial structures, such as reconnection

layers and to accommodate fully three-dimensional boundary conditions that

occur in stellarators or the evolving free boundary of a tokamak bounded by a

separatrix. The mesh uses unstructured,

3D piecewise-linear triangular finite elements in the poloidal sections. The domain decomposition consists of slicing

the toroidal geometry into a set of poloidal planes with each poloidal plane

further partitioned into equal area patches.

One or more of the poloidal patches are assigned to each processor. The fluid part of each time step consists of

uncoupled 2D scalar elliptic equations that are solved concurrently within each

poloidal plane. The PETSc library has

been used extensively to provide a portable, efficient parallel implementation

for the elliptic equations that need solution at each time step. These are

solved with a Krylov accelerated iterative scheme that uses the overlapped

Schwarz method for preconditioning.

This leads to excellent parallel scalability.

A three-dimensional mesh is utilized to

facilitate the resolution of multi-scale spatial structures, such as reconnection

layers and to accommodate fully three-dimensional boundary conditions that

occur in stellarators or the evolving free boundary of a tokamak bounded by a

separatrix. The mesh uses unstructured,

3D piecewise-linear triangular finite elements in the poloidal sections. The domain decomposition consists of slicing

the toroidal geometry into a set of poloidal planes with each poloidal plane

further partitioned into equal area patches.

One or more of the poloidal patches are assigned to each processor. The fluid part of each time step consists of

uncoupled 2D scalar elliptic equations that are solved concurrently within each

poloidal plane. The PETSc library has

been used extensively to provide a portable, efficient parallel implementation

for the elliptic equations that need solution at each time step. These are

solved with a Krylov accelerated iterative scheme that uses the overlapped

Schwarz method for preconditioning.

This leads to excellent parallel scalability.

The M3D Team has a broad experience base in using the extended MHD formulation in fusion simulations [Sugiyama00]. The M3D Team also has significant experience with kinetic formulations including fluid bulk species plus a simulation-particle energetic ion population, a hybrid formulation with fluid electrons and simulation-particle ions [Fu95]. Historically, the M3D formulation was the first to identify a new nonlinear disruption mechanism in the TFTR high-power DT fusion experiments [Park95]. M3D has also been used to study disruption prevention techniques such as massive impurity injection [Strauss98].

2.3.3 Required

Computational Resources

We can estimate the computational resources required to

carry out the necessary simulations for parameters typical of present and

proposed experiments as shown in Table I. TABLE I: Typical dimensionless

parameters for present and proposed

experiments

|

parameter |

name |

CDXU |

NSTX |

CMOD |

DIII-D |

FIRE |

ITER |

|

R (m) |

Major radius |

0.3 |

0.8 |

0.6 |

1.6 |

2.0 |

5.0 |

|

Te [keV] |

Elec. Temp. |

0.1 |

1.0 |

2.0 |

2.0 |

10 |

10 |

|

b |

Plasma beta |

0.01 |

0.15 |

0.02 |

0.04 |

0.02 |

0.02 |

|

S1/2 |

Inv resis. length |

200 |

2600 |

3000 |

6000 |

20,000 |

60,000 |

|

(r*) –1 =Ba/T1/2 |

Ion number |

40 |

60 |

400 |

250 |

500 |

1,200 |

|

a/le |

Recip. norm elec skin depth |

250 |

500 |

1000 |

1000 |

1500 |

3000 |

Estimates based on explicit time-stepping with no grid refinement: Let us first estimate the computational requirements for a 3D calculation with uniform zoning of size Dx and a explicit time-stepping scheme based on the CFL criteria for the poloidal Alfven wave, i.e. Dt = Dx / VAP. For a 3D mesh of linear dimension N, i.e., N3 mesh total mesh points, it would take N time steps to calculate one Alfven wave transit time tA = a / VAP. Typical ideal and resistive MHD instabilities would grow on the timescales TIDEAL ~ b-1/2 tA and TRESIS ~ S1/2 tA , requiring about b-1/2 N and S1/2 N time-steps, respectively. Thus, the total number of space-time points required to compute an ideal or resistive instability would be about b-1/2 N4 (ideal) and S1/2 N4 (resistive)

Current experience shows that with real performance of about 100 MFLOPS/processor and of order 1000 processor-hours, we can compute a problem with 1003 mesh points for 10**4 time steps, using a complex fluid model with the compressional wave and field terms implemented implicitly. This is about 1010 space-time points in 3 ´ 1014 operations. This is easily sufficient resolution and timesteps to calculate an ideal mode in CDX-U, and is nearly sufficient to study the initial growth phase of a nonlinear tearing mode. We see this since the number of linear mesh points is comparable to: the linear tearing-layer width, S½, the ratio of the system size to the ion Larmor radius r*, and the ratio of the system size to the electron collisionless skin depth, a/le. These are the relevant lengths that enter the two-fluid XMHD model.

The question is: what type of computer power is needed to study this physics in a larger, hotter device with a stronger magnetic field? We see from Table I. that depending on what scale length needs to be resolved, the number of mesh points in a linear direction will increase by about an order of magnitude as we go from CDX-U to DIII-D. The increase in the total number of space-time points would be the fourth power of this factor times another scaling factor that will between unity (for ideal scaling) to about 10 (for resistive scaling). Thus, the number of space-time points required would increase anywhere from 104 to 105. Running on a 10 Teraflop (delivered) computer for 3 days would correspond to about 3 ´ 1018 floating point operations which would be about 104 times greater than what was quoted above for what is available to us today, so a full DIII-D calculation might be feasible with this hardware increase alone.

Grid refinement, implicit time stepping and improved algorithms: It is straightforward to see that the above scaling estimates can be gross overestimates if we take into account improved algorithms and meshing. For example, for a field-line following mesh and with adaptive mesh refinement, the total number of mesh points should only have to grow linearly as we go to larger machines and higher resolution, rather than cubic. With implicit time differencing, the time step will not have to decrease nearly as fast as linear with zone size. More efficient solvers can give an additional factor. Thus, with these computational improvements, some of which have already been implement to varying degree in M3D and NIMROD, we can realistically expect to be able to calculate modes using XMHD models in DIII-D, NSTX, and CMOD in the time frame of this proposal, and even FIRE and ITER calculations might be within reach. Of course, it also may not be necessary to simulate the exact parameters of a machine if we can determine scaling relations from doing a series of calculations at reduced parameters.

Hybrid particle/fluid models will take additional resources, methods such as df are very efficient in that they only represent the difference from a Maxwellian as particles. One of our primary goals is to develop accurate and efficient models with which we can do meaningful calculations on the computer power available to us.

3. Research Design and Methods

Since many physical effects with radically different time and spatial scales contribute to the overall behavior of modern fusion experiments, predictive macroscopic simulation capability requires a sophisticated numerical approach based on an optimal physics-model formulation that is developed with a vision toward harnessing tremendous computing resources. The proposed center will create a unique opportunity to meet the challenges of macroscopic fusion simulation by synthesizing the individual elements introduced in Section 1.4. The goal for each element is summarized as follows:

· Code Development—the spatial and temporal stiffness of models describing macroscopic plasma behavior defies straightforward numerical implementation, irrespective of any conceivable level of computing power. The code development component of CEMM will employ the best numerical methods for solving the extended-MHD fluid models recommended by our theoretical component.

· Model Development—fluid models and closure relations derived from the fundamental kinetic description for plasmas acquire different forms under different approximations and manipulation. The theoretical component of CEMM will analytically refine the fluid model, and the heat-flux and stress-tensor closure terms describing kinetic effects, for optimal accuracy and numerical tractability, and will define test problems with analytic solutions for code validation.

· Visualization and Data Management—successful simulation of macroscopic plasma behavior requires successful diagnosis of results before a better physical understanding can be achieved. The visualization and data management component of CEMM will seek to extract the maximum amount of information possible and to provide a convenient means for data access, storage, and comparison with experimental results.

· Computer Science Enabling Technologies (CSET)—with the multitude of challenges confronted in macroscopic plasma simulation, code efficiency is a paramount issue. The CSET component of CEMM relies on several computer science projects that focus on parallel algorithm optimization and managing code complexity. We include in this category code support and dissemination.

· Applications and Validation—This is an aggressive program to pioneer new applications enabled by the new capabilities, to compare the results of the two codes NIMROD and M3D with one another and with experimental data.

Each component is an essential ingredient of the unique, unified technical effort of the proposed CEMM. Development and deployment of the two complementary simulation codes are viewed as the critical-path elements for the first years of CEMM. This is reflected in the proposed budget allocations for the NIMROD and M3D groups. CEMM will primarily rely on existing programmatic fusion funding for its theoretical support and on associated OSCAR funding for the CSET activities.

The remainder of Section 3 describes the technical approach that will be applied by each of the individual CEMM components and the management plan for coordination.

3.1 Code

Development

We are proposing a major expansion of physics simulation capabilities for both the NIMROD and M3D codes through three strategic areas of code development: 1) expanded use of implicit techniques, 2) kinetic closures for majority species, and 3) improved and adaptive meshing capabilities. The first will facilitate practical computation with the two-fluid model and with strong advection. The second will implement the kinetic based closures described in Sections 3.2.2 and 3.2.3. The third area will facilitate efficient computation of the extreme difference in spatial scale lengths arising in extended-MHD physics. These developments will draw from advances in the CSET products as indicated below. Furthermore, modular coding will be pursued to make the same advances accessible to both codes.

3.1.1 Expanded Use of Implicit Techniques

The stiff system of equations describing extended-MHD cannot be addressed in a purely explicit manner. Our approach to this computational challenge is the use of implicit and semi-implicit methods in the advance of the solution field. For single-fluid dynamics, the linearized ideal MHD system of equations is self-adjoint, leading to a Hermitian numerical operator. However, effects beyond the scope of the basic single-fluid model make the system non-self-adjoint. Therefore, modeling these effects in large-scale simulations without prohibitive restrictions on time step will require the solution of non-Hermitian matrices. The objectives for this development area are:

·

Revise the

magnetic field advance to improve treatment of the Hall term.

A symmetric numerical operator is presently used in NIMROD to make the Hall advance numerically stable at large time step. Though this form is consistent with the partial differential equations, at the time steps needed for modeling macroscopic fusion phenomena, it leads to substantial inaccuracies [Harned89], including artificially large magnetic reconnection rates. With capabilities for solving non-Hermitian matrices, a more accurate semi-implicit scheme is available [Harned89]. It uses a fourth-order in space, second-order in time numerical operator derived using the method of differential approximation discussed in [Caramana91]. The finite element representations in M3D and NIMROD are suitable for derivatives of order 2 or less, so this scheme requires an auxiliary vector that represents second derivatives of the new magnetic field, while the advance uses second derivatives of the auxiliary vector. Since this operator is based on a linearized Hall term, electron viscosity will be needed for nonlinear applications to truncate power flow at the smallest resolved scales.

·

Incorporate

gyroviscosity free of time-step restriction.

The magnetic moment of charged particles gyrating about a

magnetic field leads to collision-independent terms in the stress tensor. Their coefficients have the same ![]() dependence as the

diamagnetic drifts induced by the Hall term in the presence of an electron

pressure gradient and should therefore be included when the two-fluid model is

used. The nonsymmetrical form of these

terms implies use of a non-Hermitian operator for numerical stability at large

time step.

dependence as the

diamagnetic drifts induced by the Hall term in the presence of an electron

pressure gradient and should therefore be included when the two-fluid model is

used. The nonsymmetrical form of these

terms implies use of a non-Hermitian operator for numerical stability at large

time step.

·

Incorporate

implicit advection.

The advective terms (![]() ) in the set of fluid equations are presently treated with an

explicit predictor/corrector algorithm [Lionello99] in the NIMROD code, where numerical

stability restricts the time step to the Courant-Friedricks-Lewy condition,

) in the set of fluid equations are presently treated with an

explicit predictor/corrector algorithm [Lionello99] in the NIMROD code, where numerical

stability restricts the time step to the Courant-Friedricks-Lewy condition, ![]() . Though NIMROD has

been successfully applied to nonlinear conditions where flows have reached several

tenths of the Alfvén speed, numerical stability greatly restricts the time

step. This can be prohibitive at high

S-values, where fine mesh spacing is required near resonant surfaces. An implicit algorithm for flow can remove

the numerical stability restriction, and this will also lead to non-Hermitian

matrices in the numerical equations.

. Though NIMROD has

been successfully applied to nonlinear conditions where flows have reached several

tenths of the Alfvén speed, numerical stability greatly restricts the time

step. This can be prohibitive at high

S-values, where fine mesh spacing is required near resonant surfaces. An implicit algorithm for flow can remove

the numerical stability restriction, and this will also lead to non-Hermitian

matrices in the numerical equations.

·

Optimize parallel algorithms for solving

non-Hermitian matrices.

We expect that iterative methods based on the Krylov subspace will provide the most efficient means for solving the non-Hermitian matrices envisioned for XMHD modeling. We have previously coupled NIMROD to the AZTEC parallel solver library [http://www.cs.sandia.gov/CRF/aztec1.html], and M3D uses the PETSc parallel solver library [http://www-fp.mcs.anl.gov/petsc/index.html]. Both of these libraries offer GMRES and transpose-free QMR. Experience with NIMROD has shown that preconditioners developed specifically for our application outperform those available in the AZTEC library [Plimpton99]. We therefore plan to use a new AZTEC capability of calling user-supplied preconditioning routines during the iterations. In addition, the anticipated collaboration with the TOPS topical center will help us optimize use of PETSc.

3.1.2 Kinetic

Closures for Majority Species

The two proposed methods for incorporating kinetic effects into the fluid equations are similar in their use of trajectory tracking to accumulate nonlocal contributions to the closure terms. They will therefore use the same parallel particle-tracking algorithm, though information gathered from it will differ in the two approaches. The tracking of trajectories is a numerical prerequisite for either closure scheme.

The objectives for this development area are:

·

Implement a

modular and efficient search algorithm.

Although trajectory tracking is common in simulations using uniform grids, it presents algorithmic challenges in highly non-uniform and/or unstructured finite element meshes. An approach already used in M3D for energetic ions tracks particle trajectories on a structured mesh only, and fields are interpolated from the nonuniform mesh to the uniform particle grid. NIMROD is presently pursuing a different approach, where particles are tracked in the nonuniform finite element mesh. Here, an efficient search algorithm is the most important requirement. We have begun implementing an approach where the vertices of each finite element are mapped from real-space coordinates to logical-space coordinates [Alejandro97], creating a look-up table for locating positions. We intended to compare efficiency and accuracy of these different approaches and then pursue the most promising of the two.

·

Implement kinetic

closures based on simulation-particles.

In this approach, df is computed along sample trajectories in phase space that coincide with the evolution of a random initial distribution of simulation particles. Particle trajectories are influenced by the time-evolving magnetic and electric fields, and the equation for each df sample uses the local fluid moment information along its trajectory. This information is gathered in statistical forms of the closure relations that are used in the advance of the fluid moments. Calculating df along each trajectory and the statistical closure relations are straightforward in the explicit time-advance envisioned for the ion species. Computational practicality then depends on the parallel efficiency of particle tracking and on the gather/scatter operations relating field and particle information.

·

Implement kinetic

closures based on the CEL approach.

The expression for heat flux that is based on the analytic CEL expansion for df [Held01] will be incorporated for simultaneous evolution with the fluid moments. The same trajectory-tracking algorithm used for simulation-particle closures will be used for the required parallel integrations. Numerical research will focus on the stiffness associated with the rapid parallel communication. We will explore a semi-implicit approach, where an explicit CEL computation of the kinetic heat flux is numerically stabilized by a 3D thermal conduction operator with local anisotropic diffusivity. Similar developments will be explored for the parallel parts of the electron stress tensor.

3.1.3 Mesh methods

With different length-scales being important in different regions of the macroscopic computational domain, efficiency is strongly dependent on effective grid layout. We will pursue improvements in mesh generation, 3D field-line following meshes, and mesh adaptivity to achieve optimal spatial resolution performance.

The objectives for this development area are:

·

Improve mesh

generation with both closed and open magnetic surfaces.

To treat Edge-Localized Modes (ELMs), it is necessary to include the magnetic field separatrix between the closed magnetic surfaces of the core, and the open surfaces of the vacuum region. Progress has been made in this area [Strauss96], but a more general method of mesh generation is needed that produces a mesh aligned with magnetic surfaces near an X point of the poloidal magnetic field.

·

Implement a

field-aligned mesh.

M3D is able to use different grid geometries on each poloidal plane. This gives the possibility of a field-line following mesh, which has been used successfully in gyrokinetic simulations [Lin00]. Each meshpoint is displaced from its position on the previous poloidal plane by integrating along a field line of the equilibrium magnetic field. The displacement can be slightly altered to ensure that after a full toroidal rotation, each meshpoint coincides with a meshpoint of the initial poloidal plane. If the magnetic field has significant shear, the cells of the mesh will become highly distorted. This can be avoided by reconnecting triangular mesh cells, to get a good quality mesh. An advantage of a field line following mesh is that, since unstable modes tend to have little structure along field lines, the mesh can be very efficient for representing the modes. This is particularly important for short wavelength modes, such as occur in ballooning mode turbulence, or in a turbulent 3D reconnection layer. Another possible advantage is in parallel thermal transport along the magnetic field, by decreasing cross-field aliasing.

·

Investigate mesh

adaptivity.

As we move to a more sophisticated and computationally intensive modeling, we will have to deal with extremely fine scale dynamical processes. For simple resistive reconnection, the reconnection layer can be resolved by a simple refinement in a radial flux coordinate. When more physics is included, it may be necessary to resolve fine scale structure occurring in the reconnection layer. To deal with this, one needs to refine the mesh in the poloidal angle, and perhaps also in toroidal angle. This can be done readily with a mesh of triangular and rectangular elements. As a first step, the refinement can be done statically because the magnetic field does not change a great deal, even during reconnection. Later, we will refine the mesh dynamically. The main difficulty is to distribute the mesh across processors to maintain load balance. In this area, we expect to benefit from the research program of the Terascale Simulation Tools Technology Center.

3.2 Model Development

Comprehensive

macroscopic plasma simulation requires assembling analytic and numerical

techniques to extend large-scale fluid computation to include two-fluid and kinetic

effects. In contrast to resistive MHD,

two-fluid modeling is not well established, even without kinetic effects. This model is required for typical tokamak

parameters, where thermal speeds exceed bulk fluid motions. Here, thermally induced particle drifts lead

to significant differences between electron and ion fluid motions perpendicular

to the magnetic field. Many different

formulations describing this ordering of terms have been derived [Hazeltine92],

but until recently, few have been successfully implemented for large-scale

nonlinear simulation [Sugiyama00]. The

goal of theoretical model development for this level is to refine the

formulation for computational tractability.

Particular attention will be paid to terms like

"gyroviscosity" arising in the stress tensor due to spatial

variations of particle magnetic moments.

In this case, analytic cancellation with advective terms (of the form ![]() ) can lead to variants of the two-fluid model that are easier

to solve numerically [Sugiyama00].

) can lead to variants of the two-fluid model that are easier

to solve numerically [Sugiyama00].

Important kinetic

effects that require numerical modeling also arise in macroscopic behavior. In some cases, the important behavior

involves a minority population of energetic ions, where large gyro-orbit

effects change the nature of macroscopic MHD modes. Simulation particles have already been used in M3D to model such

cases [Fu95]. Models and approaches

used for the energetic ion component lay a foundation for a more comprehensive

level, where the majority species fluid equations are closed with numerical

computations of heat flux and stress.

This is required for simulating the nonlocal effects associated with the

free streaming of majority-species particles parallel to magnetic field lines,

such as the neoclassical effects described earlier, and for large gyro-orbit effects

of the majority ion population.

Numerical methods for this comprehensive level of nonlinear macroscopic

simulation are in their infancy. It

will therefore receive the largest share of the model development efforts of

CEMM, using the approaches outlined in Sections 3.2.1-3.2.3.

3.2.1 Kinetic Modeling Framework

Parallel particle streaming and large ion gyro-orbits lead to important kinetic effects in fusion plasmas; these effects may often be described by small deviations from a Maxwellian distribution that is parameterized by density and temperature profiles. This concept has led to improved numerical performance of particle-based simulation of microscopic plasma behavior [Parker93], and it is used in the M3D simulations for energetic ions [Fu95]. It also serves as the framework for a more general kinetic macroscopic simulation, but the Maxwellian distributions must evolve in time to keep kinetic deviations small. The fluid equations with closure information from the kinetic distortions will be used to advance the Maxwellian distributions, while the kinetic distortions (df) are advanced using the fluid moment variations encountered along particle trajectories. There are two realizations of this approach: one integrates the df distribution function along a sample set of characteristics, and the other uses an analytic Chapman-Enskog-Like (CEL) expansion of the kinetic distortion in velocity-space. [Chang92] These approaches are complementary in that the former is more appropriate for describing ion kinetics, while the latter is better suited for electron kinetics. Together, they will provide comprehensive kinetic modeling that can be included in macroscopic plasma simulations.

3.2.2 Kinetic Modeling through Simulation Particles

In this approach, trajectories in phase space are evolved numerically, subject to the electric and magnetic forces encountered by a physical particle, except for gyro-orbit motions that are included through the numerical averaging of gyrokinetics [Lee88]. The trajectories serve as characteristics where the kinetic distortion, df, is computed from the fluid moments that are encountered. The heat flux and stress tensor at a physical location are then computed statistically from the characteristics in the vicinity of that physical location at a given point in time. Heat flux for species a, for example, appears as

(1)

(1)

where ![]() is a shape function

for simulation particle j, wj is the difference between the particle's velocity and

the local fluid velocity for species a,

and s is a normalization factor. Equation (1) has the same form as the

analytic definition of heat flux, except (1) contains a correction term to eliminate

heat flux associated with numerical error.

This computation is then used in the advance of the fluid pressure for

species a, and a similar term for

kinetic stress appears in the fluid velocity equation. Other than these closure terms, the fluid

advance is the same as what is used for the two-fluid model without kinetic

effects; the fluid part of the algorithm is fundamentally unchanged. This was the intent of early formulations of

this evolving-Maxwellian/df approach.

[Nebel94].

is a shape function

for simulation particle j, wj is the difference between the particle's velocity and

the local fluid velocity for species a,

and s is a normalization factor. Equation (1) has the same form as the

analytic definition of heat flux, except (1) contains a correction term to eliminate

heat flux associated with numerical error.

This computation is then used in the advance of the fluid pressure for

species a, and a similar term for

kinetic stress appears in the fluid velocity equation. Other than these closure terms, the fluid

advance is the same as what is used for the two-fluid model without kinetic

effects; the fluid part of the algorithm is fundamentally unchanged. This was the intent of early formulations of

this evolving-Maxwellian/df approach.

[Nebel94].

Model development will focus on outstanding issues that need attention before practical simulation is possible. These are 1) the filamentation of the df distribution over transport time scales, 2) the accumulation of low order moments in the df distribution due to statistical errors, and 3) the stiffness arising from unrestrained parallel motions. The first two issues improve with increasing phase space sampling, i.e. very large numbers of computed trajectories (107 is now common in microscopic modeling, and Terascale computing can bring this to 109). However, resolving the filamentation issue will need some form of collision modeling [Brunner99]. In addition, numerical corrections to eliminate low-order moment accumulation have been tested by CEMM Co-PI Sovinec and will be further investigated as model development. The stiffness issue may ultimately require implicit techniques, especially for electrons. However, ion kinetic effects arise in important macroscopic behavior where parameters are tractable with explicit computation. For this reason, the simulation-particle approach will first be applied for studying ion kinetic effects. The complementary CEL expansion described next will first be applied for studying electron kinetic effects.

3.2.3 Kinetic Modeling through Chapman-Enskog-Like Closure

The proposed CEL approach to modeling kinetic physics differs from simulation-particle closure in that the kinetic distortions are determined from analytic solutions of gyro-averaged drift-kinetic equations. These solutions are obtained via polynomial and/or rational function expansions in velocity space. In contrast to classical, collisional Chapman-Enskog closure theory, which is inappropriate for high-temperature plasmas [Chapman39], the proposed CEL expansion is based on the small parallel gradients of the evolving Maxwellian distribution. The advantage of this method is that the closure terms at a given position are expressed in terms of a single numerical integration covering parallel motion, rather than many sample phase space contributions summed statistically. The disadvantage is that analytically accounting for perpendicular gradients is more difficult. These tradeoffs make the CEL approach well suited for computation of electron kinetic effects, where fast parallel motions are paramount. Electron heat flux computations based on the proposed CEL expansion have been formulated and implemented for macroscopic simulation [Held01]. The required parallel integrations use the same trajectory-tracking algorithm that is employed for simulation-particle tracking.

Model improvements here will concentrate on developing the

CEL electron stress tensor closure to model perturbed neoclassical effects,

including the mechanisms underlying the neoclassical tearing mode described in

Section 2. Additional developments seek

to incorporate electron and ion drift phenomena. Nevertheless, it is anticipated that simulating ion gyro-orbit

effects with simulation-particle closures and nonlocal, parallel electron

dynamics with CEL closures will provide the most effective use of large-scale

computation.

3.3 Visualization and Data Management

Improvements in plasma diagnostic techniques have made it increasingly feasible to demonstrate excellent correlations between experimental results and theoretical models. In order to maximize the effectiveness of simulation/experiment comparisons, it will be necessary to address critical computer science and enabling technology issues in the area of data management and visualization. The power of advanced computing to solve critical plasma science problems can be fully exploited only if a capable infrastructure is established and effective software tools are made available. Such tools should include standardized data structures and access methods, synthetic diagnostics allowing comparisons to experimental diagnostics, standard analysis and visualization utilities, and common code interfaces. In a broader sense for the entire national fusion community, these issues will be addressed in the National Fusion Collaboratory discussed in Section 3.4. Specific to this proposal, we will address near term data management and visualization issues directly related to NIMROD and M3D.

We will build on and greatly expand a pilot project begun under PSACI funding, to create the infrastructure to store results of NIMROD and M3D into the MDSplus data management system. MDSplus is a client/server system that has been used in the experimental community (C-Mod, DIII-D, NSTX) to provide a common, shared, secured, network interface to all data. Storage of our simulation results in MDSplus will greatly facilitate their comparison to experimental data and will aid in the validation of code results as well as the analysis of experimental data.

Accompanying the deployment of an MDSplus based data storage system will be the development of a database management system, which keeps track of this data, supports searches of the database, and provides a convenient interface to the data manipulation and visualization system described below. The Microsoft SQL Server is being used in the experimental community to catalogue large experimental code runs. This system will be extended to allow tracking of runs by the two MHD codes that will be coupled to MDSplus. Management tools will be developed that will work with the anticipated high dimensional data objects which will be critical to the success of our research. Such management will include a high degree of feedback and iteration between data and experimental model. Comparison of datasets will be critical so that simulations can be validated against experimental data.

Presently the plasma sciences are doing interactive visualization of three-dimensional objects such as the temporal evolution of the plasma temperature profile. As our research moves towards higher dimensional data objects an exploration tool will be created to allow easy visualization of this complex data. This tool will be used to find correlations, to visualize subspaces, to find data that is characterized by a particular program or formula, and mapping between relational, object oriented, and other databases. Such tools will need to provide operations or methods over the data such as spatial proximity, "like images," or subsets of arrays. These capabilities will be fundamental to comparing experimental data with simulation results thereby allowing validation of any theoretical model. Finally, such complex visualization must analyze efficiency issues related to searching, optimized performance over networks, and organization of data based on access efficiency needs.

3.4 Computer Science Enabling Technologies

We will interface with existing and proposed computer science and numerical analysis efforts to make improvements in linear algebra, visualization, code management, and data management capabilities of the NIMROD and M3D codes.

We have agreed to collaboration with the Terascale Simulation Tools and Technologies (TSTT) Center with Dr. James Glimm as Principal Investigator. The TSTT Center will focus on the incorporation of “standard” grid generation and discretization libraries into the M3D code, and possibly the NIMROD code. This will greatly simplify code structure and subsequent code development and will enable the seamless incorporation of higher-order and other advanced capabilities in these areas. We will also explore with them the feasibility of combining potential and field advance equations to increase both the accuracy and efficiency of both.

We will also use developments from the Terascale Optimal PDE Simulations (TOPS) Center with Dr. David Keyes as the Principal Investigator. The TOPS Center proposal will focus on extending the sparse matrix solvers in PETSc in several ways that will improve the efficiency of M3D. One of their primary goals is the development of multilevel solvers that handle the full multicomponent coupling of stiff systems of PDEs. Included in this is the addition of nonlinear Schwarz domain decomposition, algebraic multigrid methods, and refinements in the implementation of several PETSc routines to improve their cache utilization and hence performance.

We are also participating in a CSET proposal on adaptive algorithms and high performance software submitted with Dr. Phil Collela as Principal Investigator, called “An Algorithmic and Software Framework for Applied Partial Differential Equations”. We will work with them to develop an adaptive mesh refinement (AMR) capability and to perform and evaluate comparison tests with M3D and with NIMROD.